More and more teams call cloud models such as DeepSeek or Qwen over APIs for Q&A, analytics, and reporting.

A common concern from engineers and security leads:

“If we send business data to the cloud for the model to process, is it really safe?”

Short answer:

If you send raw data with no protection, privacy risk is real.

Can you use powerful cloud models and keep data safe?

Yes. AskTable’s SDI (de-identification) feature is built for this.

When you call a cloud API with a question like “Analyze spending for phone 13812345678,” that number leaves your network.

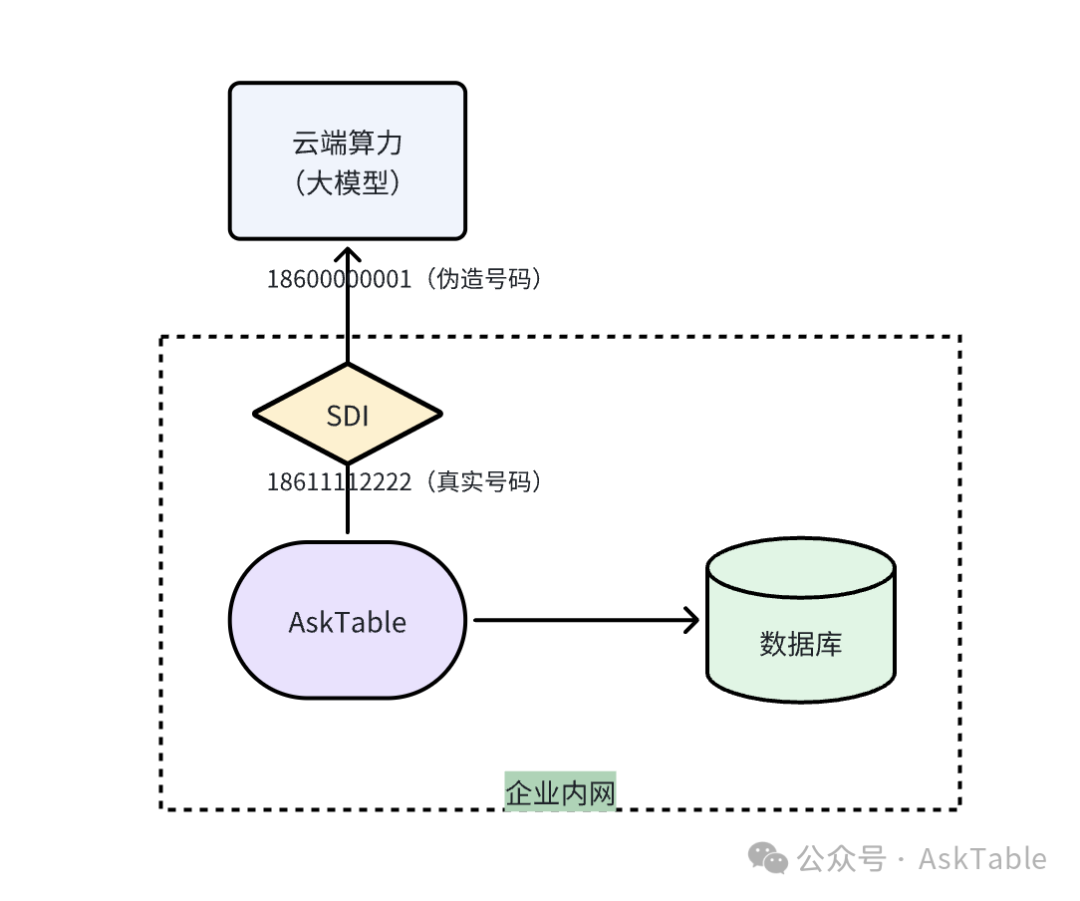

For self-hosted AskTable, SDI (Secure De-Identification Inference) keeps sensitive values local.

Core idea:

Sensitive data never leaves your network as-is. What goes out is synthetic stand-in data.

Example: you query user 18611112222. Before the LLM call, SDI may replace it with 18600000001. The real number stays on your network; the model only sees a meaningless substitute.

When you need a report, AskTable can map values back locally so workflows stay intact.

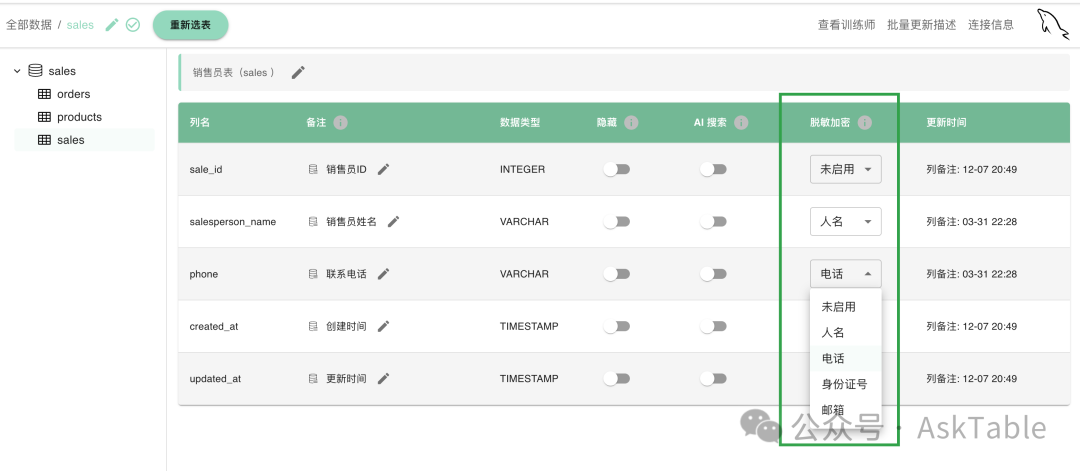
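The replace-then-restore flow described above can be sketched in a few lines of Python. This is a minimal illustration, not AskTable's actual implementation: the `PhoneMasker` class, its method names, the regular expression, and the counter-based synthetic format are all assumptions made for the example.

```python
import re

# Assumed pattern: an 11-digit mobile number starting with 1,
# not embedded in a longer run of digits.
PHONE_RE = re.compile(r"(?<!\d)1\d{10}(?!\d)")


class PhoneMasker:
    """Hypothetical helper: swaps real phone numbers for synthetic ones
    and keeps the mapping in local memory only."""

    def __init__(self):
        self.real_to_fake = {}
        self.fake_to_real = {}

    def _synthetic_for(self, real):
        # Reuse the same stand-in for a number seen before, so the
        # model still sees consistent values across a conversation.
        if real not in self.real_to_fake:
            # Keep the 3-digit prefix and fill the rest with a counter.
            # A real system would also guard against a stand-in that
            # collides with a genuine number; this sketch does not.
            fake = f"{real[:3]}{len(self.real_to_fake) + 1:08d}"
            self.real_to_fake[real] = fake
            self.fake_to_real[fake] = real
        return self.real_to_fake[real]

    def mask(self, text):
        """Run before the prompt is sent to the cloud model."""
        return PHONE_RE.sub(lambda m: self._synthetic_for(m.group()), text)

    def unmask(self, text):
        """Run locally on the model's answer to restore real values."""
        return PHONE_RE.sub(
            lambda m: self.fake_to_real.get(m.group(), m.group()), text
        )


masker = PhoneMasker()
outgoing = masker.mask("Analyze spending for phone 18611112222")
restored = masker.unmask(outgoing)
```

Here `outgoing` is the only text that would cross the network boundary, while the two dictionaries that link stand-ins to real numbers stay on the local side.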

| Field type | Example |
|---|---|
| Name | Common name patterns |
| Phone | 11-digit mobile numbers |
| National ID | 18-digit ID numbers |
| Email | user@example.com |
| Bank card | Card numbers |

You can toggle masking per field and choose synthetic formats in AskTable.
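To make the per-field toggle concrete, here is a rough Python sketch of how field detection with on/off switches might look. The field keys, regular expressions, and the `find_sensitive` function are hypothetical and chosen only to mirror the field types listed above; AskTable's real settings and detection rules may differ.

```python
import re

# Assumed detection patterns, one per field type (illustrative only).
FIELD_PATTERNS = {
    # 11-digit mobile number starting with 1
    "phone": re.compile(r"(?<!\d)1\d{10}(?!\d)"),
    # 18-character ID: 17 digits plus a final digit or X
    "national_id": re.compile(r"(?<!\d)\d{17}[\dXx](?!\d)"),
    # Simplified email shape, not a full RFC-compliant matcher
    "email": re.compile(r"[\w.+-]+@[\w-]+(?:\.[\w-]+)+"),
}

# Hypothetical per-field switches: masking is on for phone and ID, off for email.
ENABLED = {"phone": True, "national_id": True, "email": False}


def find_sensitive(text, enabled=ENABLED):
    """Return (field, value) pairs for every enabled field found in text."""
    hits = []
    for field, pattern in FIELD_PATTERNS.items():
        if enabled.get(field):
            hits.extend((field, m.group()) for m in pattern.finditer(text))
    return hits


sample = "Contact 13812345678 or user@example.com, ID 11010519900101123X"
hits = find_sensitive(sample)
```

With the switches shown, `hits` contains the phone number and the ID but skips the email address, because that field is turned off.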

Two extremes:

SDI offers a practical balance:

Security and AI adoption both matter. AskTable SDI is a pragmatic way to combine them.

If you want affordable AI without giving up data control, try SDI in AskTable.
