Before entering the AI field, I worked on model and algorithm development and held a very traditional Computer Science (CS) perspective. As large models became widespread, we observed that AI has progressed fastest in programming (for example Cursor and Claude Code), because programming is a closed loop: we develop, use, test, and optimize the data ourselves. That led to a natural idea: if AI performs so well on code, wouldn't it have an overwhelming advantage (a "dimensionality reduction strike") in big data analysis as well?

In practice, this idea turned out to be too idealistic.

Over the past year, many Text-to-SQL and Chat-to-Data products have emerged in the industry. The technical logic looks perfect: the user asks a question -> AI translates it -> the database executes it -> the result is returned. In real deployments, however, the AskTable team identified two core pain points, which prompted a fundamental adjustment of the technical route.
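The "perfect" pipeline described above can be sketched in a few lines. This is only an illustration under stated assumptions: `fake_translate` is a hypothetical stand-in for the model call, the `orders` table is invented sample data, and SQLite is used simply because it ships with Python. It is not AskTable's implementation.

```python
import sqlite3
from typing import Callable


def answer(question: str,
           translate: Callable[[str], str],
           conn: sqlite3.Connection) -> list[tuple]:
    """Question -> SQL (via the translator) -> database -> rows."""
    sql = translate(question)
    return conn.execute(sql).fetchall()


def fake_translate(question: str) -> str:
    # Hypothetical stand-in for an LLM call: always returns the same query.
    return ("SELECT region, SUM(amount) FROM orders "
            "GROUP BY region ORDER BY region")


# Invented sample data, for illustration only.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE orders (region TEXT, amount REAL)")
conn.executemany(
    "INSERT INTO orders VALUES (?, ?)",
    [("east", 10.0), ("west", 5.0), ("east", 2.5)],
)

rows = answer("What are total sales by region?", fake_translate, conn)
print(rows)
```

The sketch is linear by construction: one question goes in and one result comes out. Nothing in it captures the organizational context or the exploratory path behind the question.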

Ideally, AI is a bridge connecting business personnel and databases. In reality, implementing an AI data project requires coordinating business stakeholders (requirements), data teams (data standards), Ops teams (permissions), and management (results). Technical support teams are often drowned in cross-departmental communication noise. AI has not automatically removed the barriers of organizational structure; instead, because it demands high data accuracy, it has increased the communication cost caused by missing context.

The second pain point is a deeper question of technical philosophy.

Conclusion: Conversation (Chat) is not how humans think about data. Forcing linear conversation to carry divergent analytical thinking adds to users' cognitive load rather than reducing it.

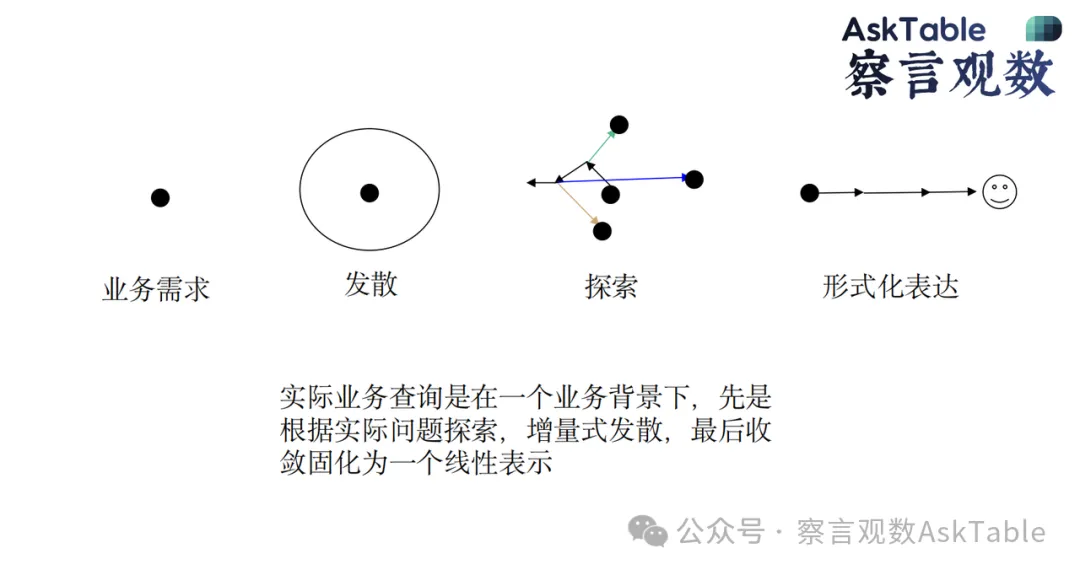

Through observing user feedback, we realized: conversation is not how humans think about data.

Business queries usually follow a process of "receiving requirements -> diverging -> exploring -> converging". Conversation, however, is linear: ask question A, get result B. If you want to go back and explore sideways, the dialog box becomes very clumsy. Conversation cannot accommodate the divergent way analysts explore.

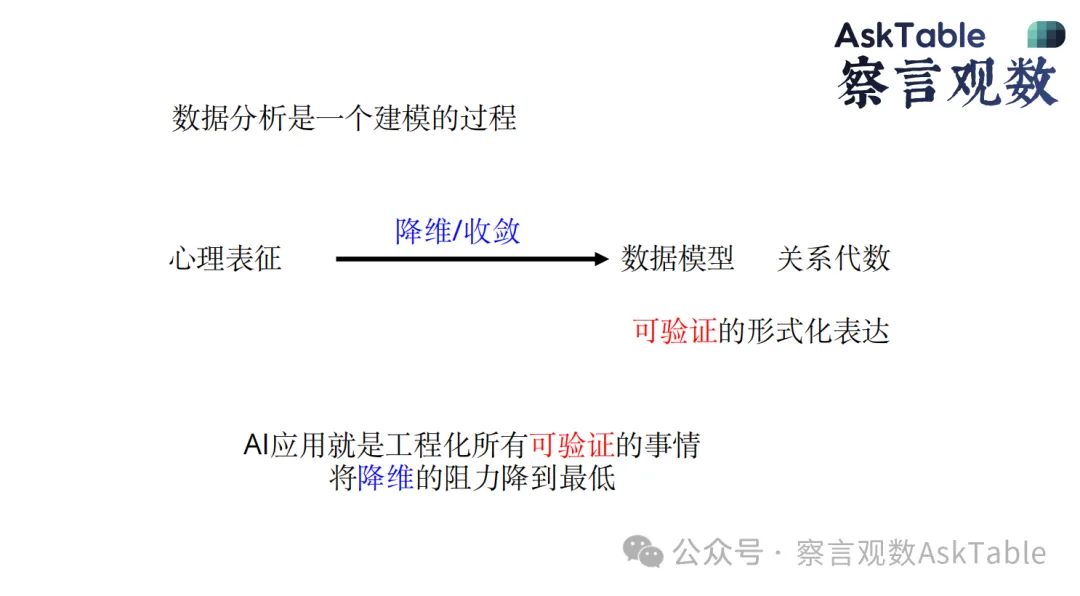

We believe that data analysis is essentially a modeling process. Humans have an internal "mental representation" of the world and business, which is difficult to verbalize. The analysis process uses means such as jotting down notes, dragging, SQL or code to gradually "reduce the dimensionality" of this complex mental representation, ultimately converging to a specific scenario, giving a chart, a conclusion or a decision reference.

What the user says is often the "simplified expression" after filtering countless ideas in their mind—it is not the truest thinking about data.

How to define AI's responsibilities in the system is crucial. AskTable proposes a clear set of engineering principles: AI applications are about engineering all "verifiable" things.

AskTable's strategy is: never let AI "guess" unverifiable things (like generating a fancy dashboard out of thin air), but use AI's extremely low marginal cost to solve all verifiable code generation and data processing tasks.
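One way to read "verifiable" is that generated SQL can be checked mechanically before it is run. The following is a minimal sketch of that idea, assuming SQLite as the engine. `check_sql` is a hypothetical helper introduced here for illustration, not an AskTable API. It rejects anything that is not a single `SELECT` and asks the database to compile the statement with `EXPLAIN`, which fails on unknown tables or columns without executing the query itself.

```python
import sqlite3


def check_sql(conn: sqlite3.Connection, sql: str) -> tuple[bool, str]:
    """Return (ok, message) for a piece of generated SQL.

    Deliberately strict: only statements starting with SELECT pass,
    so CTEs written with WITH are rejected by this simple check.
    """
    if not sql.lstrip().lower().startswith("select"):
        return False, "only SELECT statements are allowed"
    try:
        # EXPLAIN makes SQLite compile the statement against the schema
        # and return its bytecode instead of running the query.
        conn.execute("EXPLAIN " + sql)
    except (sqlite3.Error, sqlite3.Warning) as exc:
        return False, str(exc)
    return True, "ok"


# Invented schema, for illustration only.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE orders (region TEXT, amount REAL)")

good = check_sql(conn, "SELECT region, SUM(amount) FROM orders GROUP BY region")
bad = check_sql(conn, "SELECT SUM(price) FROM orders")  # no such column
not_select = check_sql(conn, "DROP TABLE orders")

print(good, bad, not_select)
```

A check like this only proves that the query is well formed against the schema. Whether it answers the business question still has to be judged by a person, which is consistent with the division of labor described here.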

In enterprise-level data scenarios, accuracy is the lifeline. AskTable emphasizes:

To achieve "what you think is what you get," we conducted two core explorations in the product:

Before exploring new products, we ran an experiment: we placed the Agent in a combined environment integrating Python, SQL, DuckDB, VectorSearch, JavaScript, and other tools. We gave it a goal, let it try, collect feedback, and iterate on its own, and had it generate in-depth reports that include its thinking process (Plan). Although this is not yet fully production-ready, it validated that AI, given tools and initial conditions, can produce unexpectedly high-quality results.
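The try, feedback, iterate loop in this experiment can be sketched as follows. This is a simplified illustration, not the experiment itself: it uses a single SQL tool backed by SQLite, and `scripted_propose` is a hypothetical stand-in for the model that corrects itself once it sees an error message. The `plan` list plays the role of the recorded thinking process.

```python
import sqlite3
from typing import Callable, Optional


def run_with_feedback(
    goal: str,
    propose: Callable[[str, Optional[str]], str],
    conn: sqlite3.Connection,
    max_attempts: int = 3,
) -> tuple[Optional[list], list[str]]:
    """Ask for SQL, run it, and feed any error back until it works."""
    plan: list[str] = []
    feedback: Optional[str] = None
    for attempt in range(1, max_attempts + 1):
        sql = propose(goal, feedback)
        try:
            rows = conn.execute(sql).fetchall()
        except sqlite3.Error as exc:
            feedback = str(exc)  # the error becomes input to the next attempt
            plan.append(f"attempt {attempt}: {sql!r} failed: {feedback}")
            continue
        plan.append(f"attempt {attempt}: {sql!r} succeeded")
        return rows, plan
    return None, plan


def scripted_propose(goal: str, feedback: Optional[str]) -> str:
    # Hypothetical stand-in for the model: wrong column first, fixed on retry.
    if feedback is None:
        return "SELECT SUM(price) FROM orders"
    return "SELECT SUM(amount) FROM orders"


# Invented sample data, for illustration only.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE orders (region TEXT, amount REAL)")
conn.executemany(
    "INSERT INTO orders VALUES (?, ?)",
    [("east", 10.0), ("west", 5.0), ("east", 2.5)],
)

rows, plan = run_with_feedback("total order amount", scripted_propose, conn)
print(rows)
for step in plan:
    print(step)
```

The loop only works because each attempt is verifiable: the database either runs the statement or returns an error that can be fed back.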

Canvas is a core feature we recently began testing internally, designed to break the linear shackles of conversation.

Different from traditional BI's drag-and-drop or ChatBI's Q&A, AskTable's Canvas abstracts the analysis process into orchestratable nodes. The entire product is composed of "nodes," representing data flow through points and edges, rather than simple Q&A history.

In Canvas, users can explicitly define AI's Context by drawing connections or selecting multiple nodes.
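To make the node and edge idea concrete, here is a minimal sketch of how context could be derived from connections. The class names, node kinds, and the `context_for` method are hypothetical and chosen for illustration; they are not AskTable's actual data model. The sketch assumes the graph is acyclic and treats every upstream node reachable through edges as context for the selected node.

```python
from dataclasses import dataclass, field


@dataclass
class Node:
    node_id: str
    kind: str      # e.g. "table", "sql", "note", "chart" (illustrative)
    content: str


@dataclass
class Canvas:
    nodes: dict[str, Node] = field(default_factory=dict)
    parents: dict[str, list[str]] = field(default_factory=dict)

    def add_node(self, node: Node) -> None:
        self.nodes[node.node_id] = node
        self.parents.setdefault(node.node_id, [])

    def connect(self, src: str, dst: str) -> None:
        """Draw an edge: data or context flows from src to dst."""
        self.parents[dst].append(src)

    def context_for(self, node_id: str) -> list[Node]:
        """Collect all upstream nodes, with ancestors before descendants."""
        ordered: list[Node] = []
        seen: set[str] = set()

        def visit(current: str) -> None:
            for parent in self.parents[current]:
                if parent not in seen:
                    seen.add(parent)
                    visit(parent)
                    ordered.append(self.nodes[parent])

        visit(node_id)
        return ordered


canvas = Canvas()
canvas.add_node(Node("orders", "table", "orders(region, amount)"))
canvas.add_node(Node("by_region", "sql", "SELECT region, SUM(amount) ..."))
canvas.add_node(Node("note", "note", "Focus on regions with falling sales"))
canvas.add_node(Node("chart", "chart", "bar chart of sales by region"))
canvas.connect("orders", "by_region")
canvas.connect("by_region", "chart")
canvas.connect("note", "chart")

context_ids = [n.node_id for n in canvas.context_for("chart")]
print(context_ids)
```

Unlike a chat history, the context here is explicit and editable: removing the edge from `note` to `chart` removes that note from what the AI sees for the chart, without touching the rest of the canvas.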

This architecture turns the analysis process into a visual mind map. Users are no longer limited by programming languages or a single conversation window, but complete the mapping from "mental representation" to "formal expression" through node combinations.

In technical implementation, AskTable always follows these principles:

Writing an Agent is not difficult; at this point in 2025, the real challenge is exploring new possibilities in human-AI interaction. We don't want to separate humans and AI. We want to encapsulate trivial, verifiable work within AI, freeing people to think and explore.

AI is responsible for tedious, verifiable logic; humans focus on abstract thinking and business exploration.

We are looking for partners with a strong sense for business and data to take part in the internal testing of our Canvas feature. If you have ideas, or want to learn more about our approach, we would be glad to hear from you.

The AskTable team is committed to exploring the next frontier in human-AI collaboration.
