If you're selecting an AI data analysis tool for your enterprise, you'll likely encounter the same question:

"Can our business data stay on our own servers?"

This question comes from compliance heads at financial companies, CTOs at healthcare enterprises, and technical managers at government departments. They share a common requirement: the data must stay in their own hands.

90% of AskTable enterprise users choose private deployment.

Enterprise data security concerns typically come from three aspects:

AskTable private deployment has one core principle: all data remains in the enterprise's own environment.

Specifically:

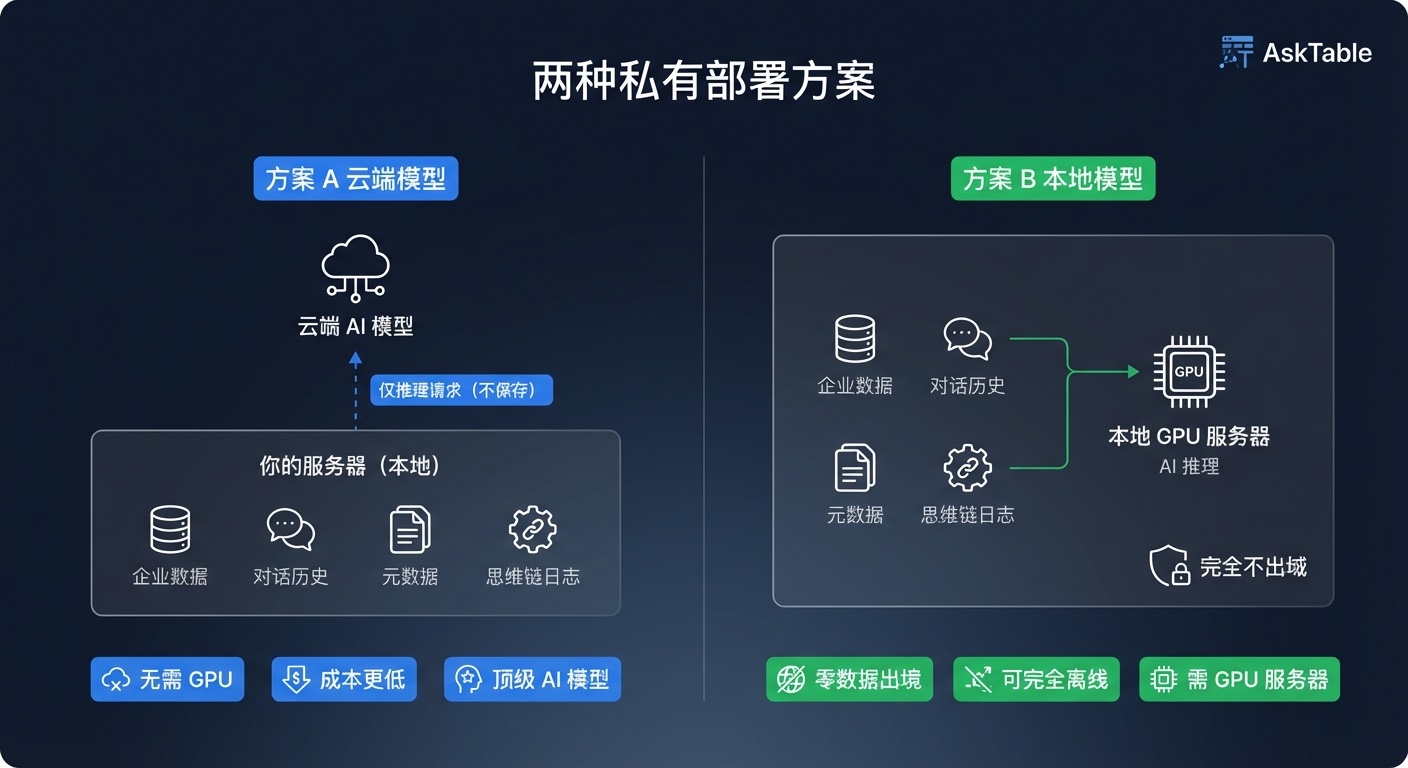

AskTable's private deployment comes in two solutions. The core difference is whether the AI model runs in the cloud or on-premises.

Solution A is what most enterprises choose.

All data is stored locally. AI inference calls cloud models (such as Claude, GPT, or Qwen); only inference requests are sent, and they are not saved. SDI desensitization with encrypted transmission is optional.
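This article does not describe how SDI works internally. As a generic illustration of desensitizing an inference request before it leaves the local environment, here is a minimal sketch. The patterns, placeholder format, and function names are all hypothetical and are not AskTable's implementation.

```python
import re

# Hypothetical patterns for illustration only; a real deployment would
# define its own rules for what counts as sensitive.
PATTERNS = {
    "EMAIL": re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+"),
    "NUMBER": re.compile(r"\b\d{6,}\b"),  # long digit runs such as card or ID numbers
}


def desensitize(text):
    """Replace sensitive literals with placeholders.

    Returns the masked text plus a mapping that stays in the local
    environment so the original values can be restored later.
    """
    mapping = {}
    for label, pattern in PATTERNS.items():
        def _swap(match, label=label):
            token = f"<{label}_{len(mapping) + 1}>"
            mapping[token] = match.group(0)
            return token
        text = pattern.sub(_swap, text)
    return text, mapping


def restore(text, mapping):
    """Put the original values back into a response that contains placeholders."""
    for token, original in mapping.items():
        text = text.replace(token, original)
    return text
```

In this sketch only the masked text would be sent to the cloud model, while the mapping never leaves the local server.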

Solution A advantages:

In Solution B, neither the data nor the inference leaves the premises.

All data is stored and processed locally, and the AI model also runs on local GPU servers.

Solution B characteristics:

Solution A doesn't need GPU servers, just a regular server.

| Configuration | Small team (5-50 people) | Large team (50-200+ people) |
|---|---|---|
| CPU | 8 cores | 16 cores+ |
| Memory | 16 GB | 32 GB+ |
| Storage | 200 GB SSD | 500 GB+ SSD |
| Network | 100 Mbps | 1 Gbps+ |
| GPU | Not needed | Not needed |
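For capacity planning scripts, the sizing table above can be encoded as a small lookup. This is a sketch only: the `ServerSpec` type and function name are made up for illustration, and treating a team of exactly 50 (or fewer than 5) as "small" is an assumption, since the table's ranges overlap at 50.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ServerSpec:
    cpu_cores: int
    memory_gb: int
    storage_gb: int      # SSD
    network_mbps: int
    gpu_required: bool


# Minimum values taken from the Solution A table.
SMALL_TEAM = ServerSpec(cpu_cores=8, memory_gb=16, storage_gb=200,
                        network_mbps=100, gpu_required=False)
LARGE_TEAM = ServerSpec(cpu_cores=16, memory_gb=32, storage_gb=500,
                        network_mbps=1000, gpu_required=False)


def minimum_app_server_spec(team_size: int) -> ServerSpec:
    """Return the minimum application server spec for a given team size."""
    if team_size < 1:
        raise ValueError("team_size must be a positive integer")
    # Assumption: a team of exactly 50 falls in the small tier.
    return SMALL_TEAM if team_size <= 50 else LARGE_TEAM
```

For example, `minimum_app_server_spec(120)` returns the large-team row, whose values are lower bounds (the table marks them with "+").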

Large teams are advised to split PostgreSQL, Redis, Qdrant, and other infrastructure components across separate servers.

Solution B needs, in addition to application servers, a GPU server to run local AI models (starting model: Qwen 235B).

Application server configuration (same as Solution A):

| Configuration | Small team (5-50 people) | Large team (50-200+ people) |
|---|---|---|
| CPU | 8 cores | 16 cores+ |
| Memory | 16 GB | 32 GB+ |
| Storage | 200 GB SSD | 500 GB+ SSD |
| Network | 100 Mbps | 1 Gbps+ |
| GPU | Not needed | Not needed |

GPU server configuration (running Qwen 235B model):

| Configuration | Small team (5-50 people) | Large team (50-200+ people) |
|---|---|---|
| GPU | 4× A100 80GB | 8× A100 80GB or H100 |
| Total VRAM | 320 GB | 640 GB+ |
| CPU | 16 cores+ | 32 cores+ |
| Memory | 64 GB | 128 GB+ |
| Storage | 1 TB NVMe SSD (including model files) | 2 TB+ NVMe SSD |
| Network | 1 Gbps | 10 Gbps (multi-card communication) |
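The "Total VRAM" row is the card count multiplied by per-card memory. The sketch below reproduces that arithmetic and adds a rough, weights-only size estimate for checking a configuration. The precision used to serve the model is not stated in this article, so the bytes-per-parameter values are assumptions, and the estimate ignores KV cache and activation memory.

```python
def total_vram_gb(gpu_count: int, vram_per_gpu_gb: int) -> int:
    """Total VRAM across all cards, as in the 'Total VRAM' row."""
    return gpu_count * vram_per_gpu_gb


def weights_size_gb(params_billion: float, bytes_per_param: float) -> float:
    """Rough weights-only footprint: 1 billion params at 1 byte each is about 1 GB."""
    return params_billion * bytes_per_param


def weights_fit(params_billion: float, bytes_per_param: float,
                gpu_count: int, vram_per_gpu_gb: int) -> bool:
    """True if the weights alone fit in total VRAM (no headroom for KV cache)."""
    return weights_size_gb(params_billion, bytes_per_param) <= total_vram_gb(
        gpu_count, vram_per_gpu_gb)


small = total_vram_gb(4, 80)   # 320 GB, matches the small-team column
large = total_vram_gb(8, 80)   # 640 GB, matches the large-team column
```

Under these assumptions, a 235B-parameter model needs roughly 470 GB at 2 bytes per parameter and roughly 235 GB at 1 byte per parameter, so it is worth confirming the serving precision with the vendor before purchasing hardware.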

AskTable private deployment comes in multiple editions for different team sizes and supports both annual subscription and permanent licensing.

For detailed edition, pricing and licensing information, please check the Pricing page.

AskTable private deployment supports two deployment locations:

Deploy in your own cloud account, so that both data and control stay under that account.

Recommended: Alibaba Cloud One-Click Deployment

Through Alibaba Cloud Market, you can quickly deploy a complete AskTable private environment:

Alibaba Cloud Market One-Click Deployment

Alternative: Sealos One-Click Deployment

With the Sealos cloud operating system, you can also deploy quickly:

Cloud deployment recommendation: use cloud AI models (Solution A) directly. This takes full advantage of the cloud network while keeping data secure within your own cloud account.

Deploy in your own data center or private cloud environment. This option suits enterprises with complete IT infrastructure.

On-premises deployment recommendation: cloud AI models (Solution A) are also suggested here, with transmission secured through SDI desensitization and encryption. For particularly sensitive scenarios, consider upgrading to Solution B.

View On-Premises IDC Deployment Documentation

| Dimension | Cloud Private Deployment | On-Premises IDC Deployment |
|---|---|---|
| Infrastructure | Cloud provider | Self-built data center |
| Deployment | Alibaba Cloud / Sealos one-click | Unified Docker Compose package |
| Operations complexity | Low (cloud platform manages infrastructure) | Medium (you maintain hardware and network) |
| Elastic scaling | High (cloud resources can be adjusted anytime) | Medium (requires hardware procurement) |
| Suitable for | Teams without complete IT infrastructure | Enterprises with an IDC and an operations team |
| Recommended solution | Solution A (cloud model) | Solution A or Solution B |
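The "Recommended solution" row, together with the recommendations stated earlier, can be written as a tiny decision helper. This is a sketch: the enum, the function name, and the `highly_sensitive` flag are hypothetical, and what counts as "particularly sensitive" is left to the enterprise's own judgment.

```python
from enum import Enum


class Location(Enum):
    CLOUD = "cloud"
    ON_PREMISES = "on_premises"


def recommend_solution(location: Location, highly_sensitive: bool = False) -> str:
    """Return "A" (cloud model) or "B" (local model) for a deployment.

    Follows the article's guidance: Solution A by default, with Solution B
    suggested only for particularly sensitive on-premises scenarios.
    """
    if location is Location.ON_PREMISES and highly_sensitive:
        return "B"
    # Cloud deployments are recommended Solution A in the comparison table.
    return "A"
```

For example, `recommend_solution(Location.ON_PREMISES, highly_sensitive=True)` returns `"B"`, while every other combination returns `"A"`.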

WeChat Work integration is available only in the private deployment version. The SaaS version cannot integrate with WeChat Work.

WeChat Work integration requires deploying services within the enterprise's internal network to interface with WeChat Work APIs. This inherently requires a private deployment environment: the SaaS version runs in the cloud and cannot connect directly to enterprise internal networks.

| Integration Method | Description |
|---|---|
| WeChat Work | Initiate data queries in WeChat Work, @ bot to get data |
| DingTalk / Feishu | Deep integration with enterprise communication tools |
| Access via mini program or official account | |
| HTTP RESTful API | Complete API interface, supports custom integration |
| Python SDK | Call AskTable capabilities through code |
| Embed in webpage | Embed in your own pages via iframe or SDK |
| Integrate with Coze / Dify / FastGPT | Connect with mainstream AI platforms |

Make a quick decision based on your actual situation:

Step 1: Confirm data security requirements

Step 2: Choose AI model solution

Step 3: Choose deployment location

Step 4: Choose edition

See the Pricing page for detailed editions and pricing.
