Imagine this scenario:

On Monday morning, you ask an AI assistant: "How were sales in East China last week?" It checks the data and gives you the result. You add: "From now on, for this kind of sales data, please include month-over-month comparison. Our team prefers to see that." The AI says okay.

On Wednesday, you ask: "How are things in South China this week?"

Then you discover—it gives you another plain number, with no comparison, no context. As if that Monday conversation never happened.

This isn't a story about "poor memory"; it's an accurate picture of most current AI data analysis tools.

Each conversation starts from scratch, like an employee who's always in their first week: you repeatedly explain business terminology, correct output formats, and tell it "we don't look at absolute values, we look at trends." The tool is smart, but its intelligence never accumulates.

AskTable is changing this.

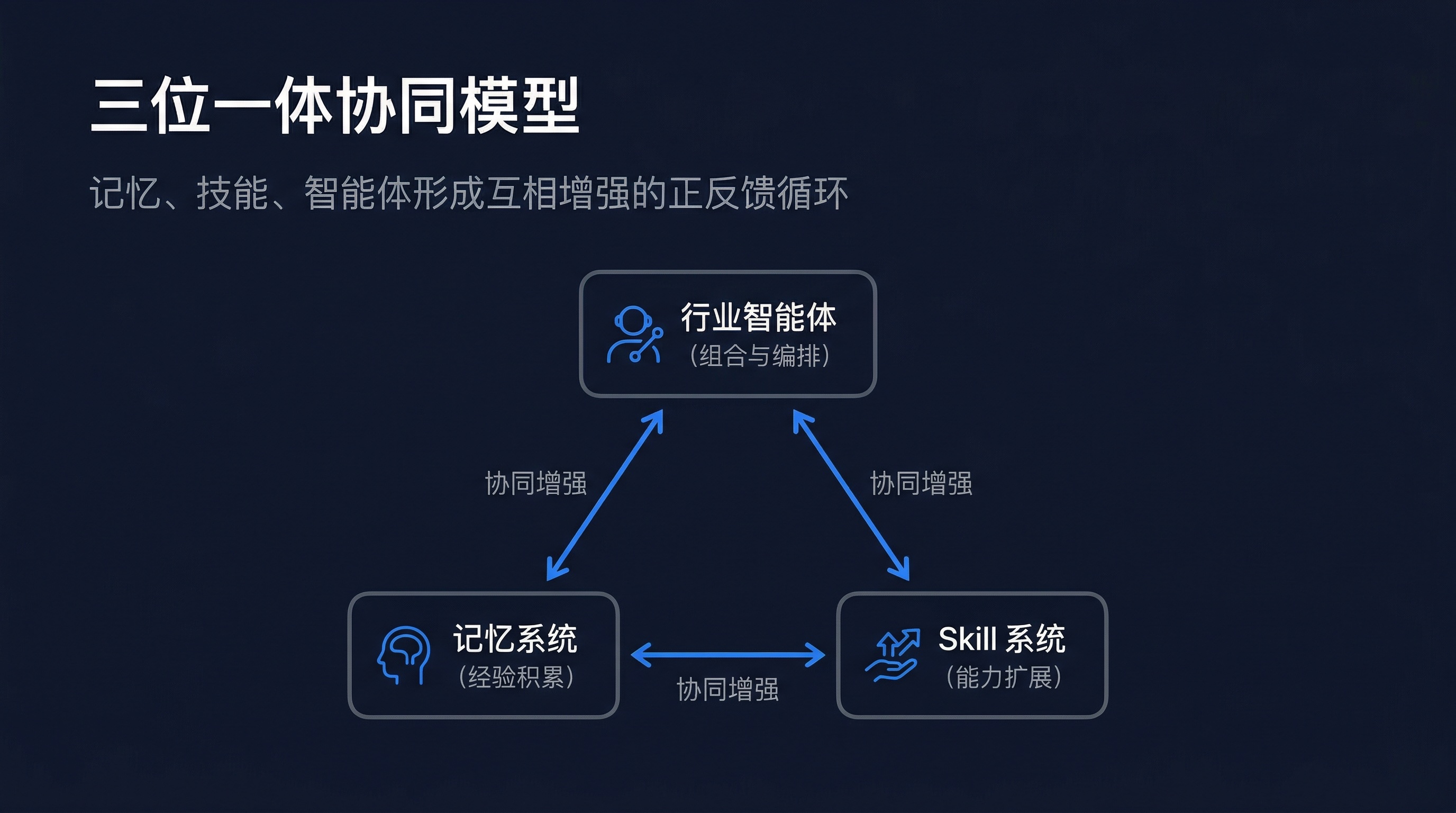

Through the synergy of three core capabilities (Memory System, Skill System, and Industry Agents), AskTable is transforming AI from a perpetual "newcomer" into a continuously growing data team partner.

In data analysis scenarios, a general AI assistant faces three fundamental shortcomings:

| Gap | Specific Manifestation | User Experience |
|---|---|---|
| Memory Gap | Previous preferences, corrected errors, and established context are all lost | "I have to teach it everything again each time" |
| Capability Gap | Can only do general Q&A, can't execute professional analysis tasks | "It can chat about anything, but nothing well" |
| Cognitive Gap | Doesn't understand industry terminology, business logic, or analysis frameworks | "It doesn't understand our industry" |

AskTable's approach isn't to patch individual points, but to build three mutually supportive capability pillars at the system level: the Memory System, the Skill System, and Industry Agents.

These three capabilities aren't simply stacked on top of each other; they form a positive feedback loop: memory helps agents understand users better, Skills make agents more capable, and agent usage in turn generates new memories and skill optimizations.

Let's break down these three pillars one by one.

The core problem the memory system solves is: keeping AI coherent across different sessions.

This isn't simply "saving chat history," but selectively extracting, storing, and retrieving information valuable to users:

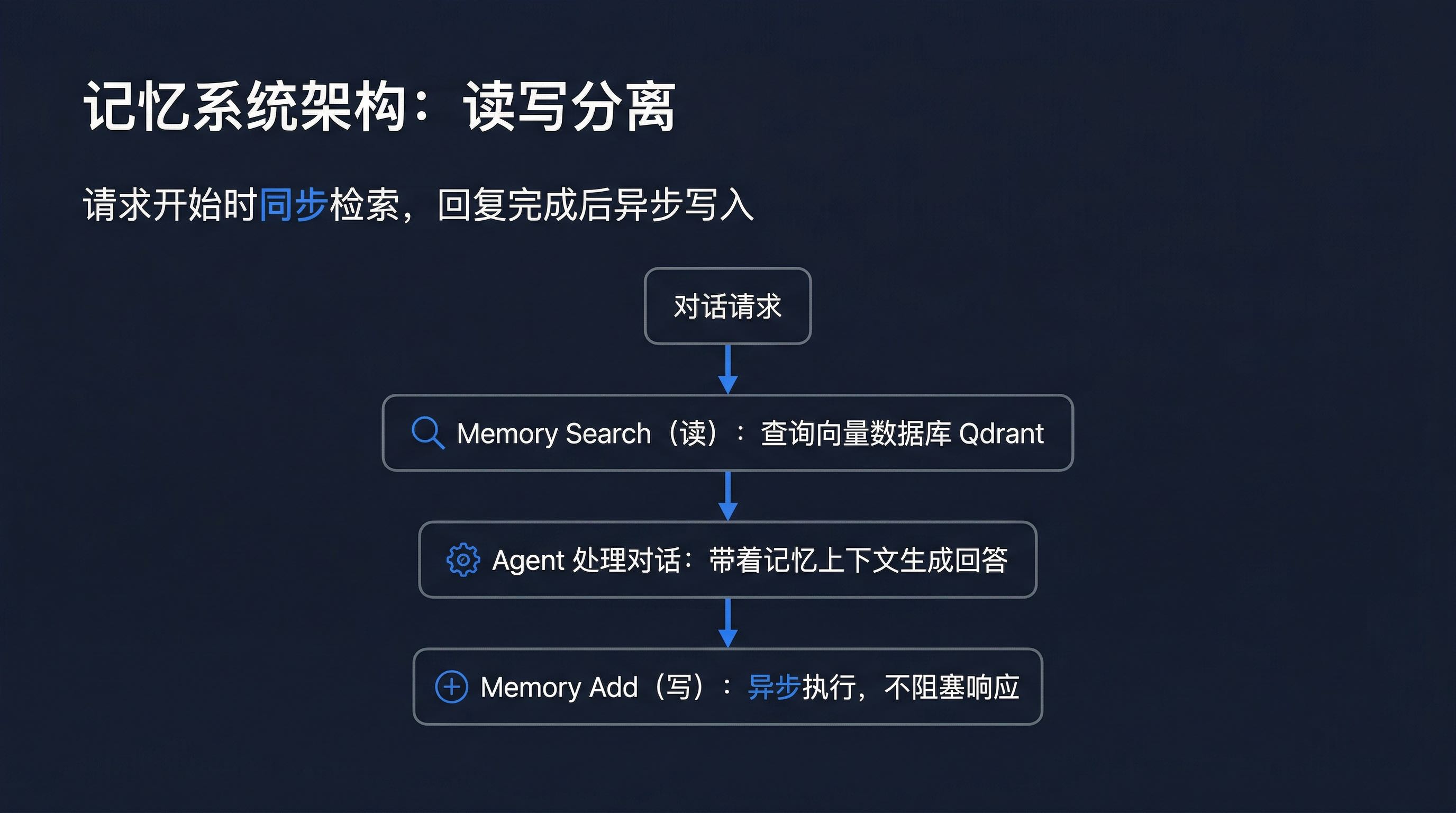

The memory system is built on mem0 + Qdrant, using a read-write separation design:

Key Design Decisions:

Read-Write Separation: Search executes synchronously at the start of a request, Add executes asynchronously after the reply completes, ensuring no impact on response speed.

Agent-Level Isolation: The memory isolation granularity is at the Data Agent level, not user level. This means all users under the same Data Agent (such as "Sales Analysis Assistant") share memory, forming team-level collective memory.

Protocol Abstraction Layer: The memory system is defined through Protocol interfaces, with mem0 as the current implementation. This ensures seamless switching to other memory solutions in the future without affecting upper-layer logic.

LLM-Driven Memory Extraction: Instead of simply saving conversation text, structured memory information (preferences, facts, corrections) is extracted from conversations through LLM, deduplicated, and stored in a vector database.
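The read-write separation described above can be sketched with `asyncio`. This is a minimal illustration under stated assumptions, not AskTable's actual code: `FakeMemory`, `generate_reply`, `handle_request`, and `demo` are hypothetical names, and the short sleep stands in for slow LLM-driven extraction.

```python
import asyncio


class FakeMemory:
    """Hypothetical stand-in for a memory provider; stores plain strings."""

    def __init__(self) -> None:
        self.items: list[str] = []

    async def search(self, query: str, agent_id: str) -> list[str]:
        # Read path: awaited before the reply is generated.
        words = query.lower().split()
        return [m for m in self.items if any(w in m.lower() for w in words)]

    async def add(self, text: str, agent_id: str) -> None:
        # Write path: simulate slow extraction, then store.
        await asyncio.sleep(0.05)
        self.items.append(text)


async def generate_reply(query: str, memories: list[str]) -> str:
    # Placeholder for the LLM call.
    return f"answer to {query!r} using {len(memories)} memories"


async def handle_request(
    memory: FakeMemory, query: str, agent_id: str, background: set
) -> str:
    memories = await memory.search(query, agent_id)  # read before replying
    reply = await generate_reply(query, memories)
    # Schedule the write without awaiting it, so the reply returns first.
    task = asyncio.create_task(memory.add(query, agent_id))
    background.add(task)  # keep a reference so the task is not lost
    task.add_done_callback(background.discard)
    return reply


async def demo() -> tuple[str, int, int]:
    memory, background = FakeMemory(), set()
    reply = await handle_request(
        memory, "sales in East China", "sales-agent", background
    )
    stored_at_reply = len(memory.items)  # the write has not finished yet
    await asyncio.gather(*background)    # wait for background writes
    return reply, stored_at_reply, len(memory.items)
```

Running `asyncio.run(demo())` shows the reply being produced before the memory write lands, which is the property the design relies on to keep response time unaffected.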

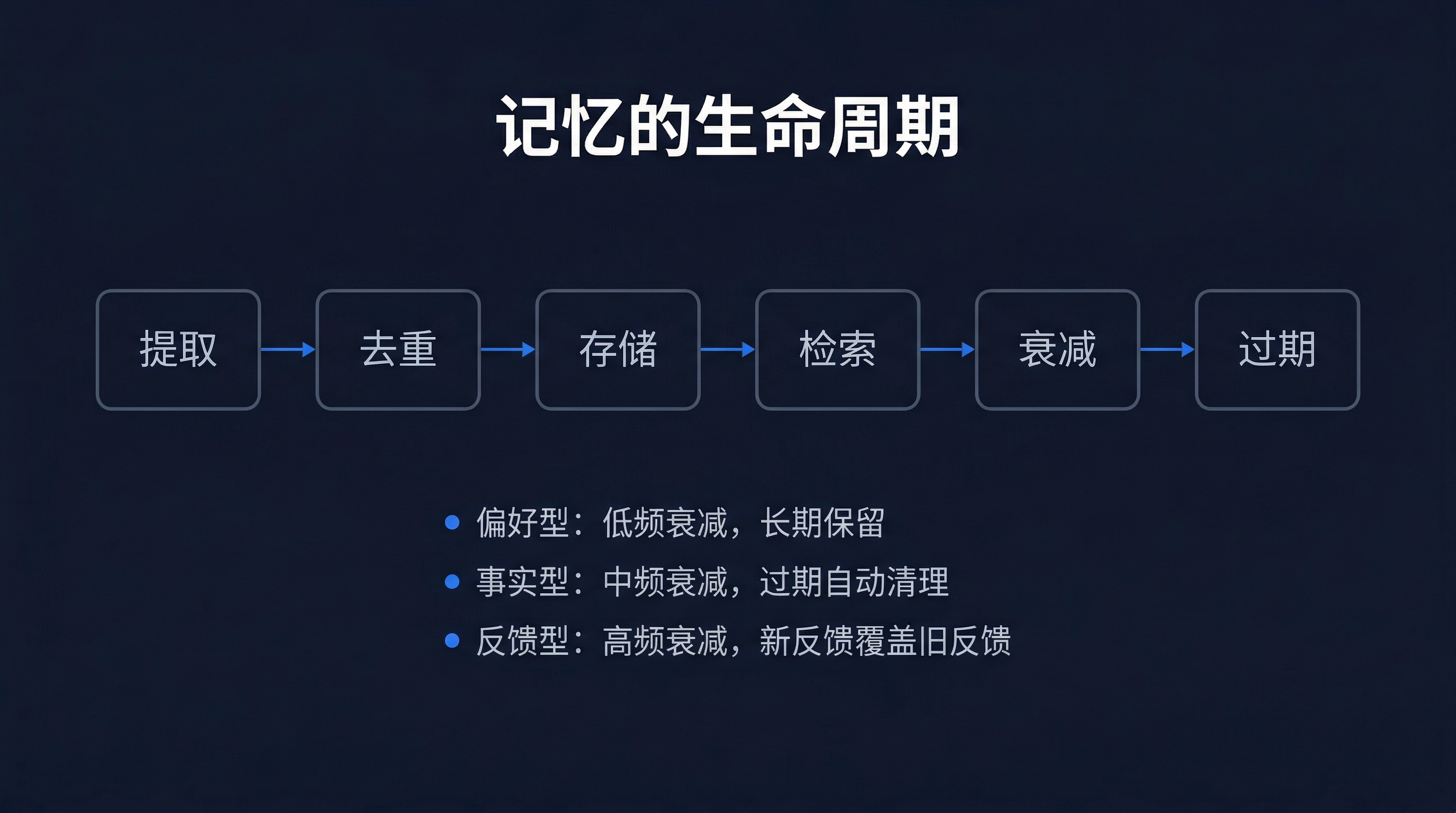

Memory isn't simply "stored" and "retrieved"—it has complete lifecycle management:

Three Types of Memory Extraction:

| Type | Example | Decay Strategy |
|---|---|---|
| Preference | "I like to see MoM data" | Low-frequency decay, long-term retention |
| Fact | "Q1 target is 50 million" | Medium-frequency decay, auto-cleanup when expired |
| Feedback | "This analysis doesn't need tables" | High-frequency decay, new feedback overwrites old |

This classification ensures the memory system doesn't grow infinitely, while important long-term preferences are retained.
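One possible way to realize type-specific decay is an exponential score with a different half-life per memory type. The sketch below is illustrative only: the half-life values, the `Memory` dataclass, and the pruning threshold are assumptions, since the article only distinguishes low, medium, and high decay. It also does not model the "new feedback overwrites old" rule.

```python
from dataclasses import dataclass

# Hypothetical half-lives in days, chosen only for illustration.
HALF_LIFE_DAYS = {"preference": 180.0, "fact": 30.0, "feedback": 7.0}


@dataclass
class Memory:
    kind: str        # "preference", "fact", or "feedback"
    text: str
    age_days: float  # time since the memory was last confirmed


def retention_score(memory: Memory) -> float:
    """Exponential decay: the score halves every half-life."""
    return 0.5 ** (memory.age_days / HALF_LIFE_DAYS[memory.kind])


def prune(memories: list[Memory], threshold: float = 0.1) -> list[Memory]:
    """Keep only memories whose score is still at or above the threshold."""
    return [m for m in memories if retention_score(m) >= threshold]
```

With these assumed values, a 60-day-old preference and fact both survive pruning, while 60-day-old feedback is dropped.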

The memory system is defined through Protocol interfaces, not directly dependent on mem0's concrete implementation:

```python
from __future__ import annotations

from typing import Protocol

from mem0 import AsyncMemory

# MemoryEntry, Message, and Mem0Config are project types defined elsewhere.


class MemoryProvider(Protocol):
    """Memory provider protocol, ensuring replaceability."""

    async def search(
        self,
        query: str,
        agent_id: str,
        limit: int = 5,
    ) -> list[MemoryEntry]:
        """Retrieve relevant memories based on query."""
        ...

    async def add(
        self,
        messages: list[Message],
        agent_id: str,
    ) -> list[MemoryEntry]:
        """Extract and store new memories from conversations."""
        ...

    async def get_all(self, agent_id: str) -> list[MemoryEntry]:
        """Get all memories under an Agent."""
        ...

    async def delete(self, memory_id: str) -> bool:
        """Delete the specified memory."""
        ...


# Current implementation: mem0
class Mem0MemoryProvider:
    def __init__(self, config: Mem0Config):
        self.memory = AsyncMemory.from_config(config)

    async def search(self, query, agent_id, limit=5):
        # The Data Agent ID is passed as mem0's user_id, so memories are
        # scoped to the agent rather than to an individual user.
        results = await self.memory.search(
            query=query,
            user_id=agent_id,
            limit=limit,
        )
        return [self._to_entry(r) for r in results]

    # ... other method implementations
```

This means if there's a need to switch to a self-developed memory solution or other open-source solution in the future, only the same Protocol interface needs to be implemented, with no modifications to upper-layer code.
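To illustrate the replaceability claim, here is a toy provider that satisfies a reduced version of the protocol using keyword overlap instead of vector search. Everything in it is a sketch under assumptions: the `MemoryEntry` fields, the `add_text` helper, and the `recall` function are hypothetical, and the protocol is trimmed to two methods for brevity.

```python
import asyncio
import itertools
from dataclasses import dataclass
from typing import Protocol


@dataclass
class MemoryEntry:  # hypothetical minimal shape; the article does not define it
    id: str
    agent_id: str
    text: str


class MemoryProvider(Protocol):
    async def search(
        self, query: str, agent_id: str, limit: int = 5
    ) -> list[MemoryEntry]: ...

    async def delete(self, memory_id: str) -> bool: ...


class InMemoryProvider:
    """Toy provider: keyword overlap instead of vector search."""

    def __init__(self) -> None:
        self._entries: dict[str, MemoryEntry] = {}
        self._ids = itertools.count(1)

    async def add_text(self, text: str, agent_id: str) -> MemoryEntry:
        entry = MemoryEntry(id=str(next(self._ids)), agent_id=agent_id, text=text)
        self._entries[entry.id] = entry
        return entry

    async def search(
        self, query: str, agent_id: str, limit: int = 5
    ) -> list[MemoryEntry]:
        words = set(query.lower().split())
        hits = [
            e for e in self._entries.values()
            # Isolation is by agent_id, so all users of one agent share entries.
            if e.agent_id == agent_id and words & set(e.text.lower().split())
        ]
        return hits[:limit]

    async def delete(self, memory_id: str) -> bool:
        return self._entries.pop(memory_id, None) is not None


async def recall(provider: MemoryProvider, query: str, agent_id: str) -> list[str]:
    """Upper-layer code depends only on the protocol, not on a concrete class."""
    return [e.text for e in await provider.search(query, agent_id)]
```

Because `recall` is typed against `MemoryProvider`, swapping `InMemoryProvider` for a mem0-backed class would not require changing it. The `agent_id` filter also mirrors the agent-level isolation described earlier.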

Here's a comparison:

Without the memory system:

Conversation A (Monday):

User: Sales in East China

AI: 12 million

User: From now on, include YoY and MoM for this kind of data

AI: Okay

Conversation B (Wednesday):

User: Sales in South China

AI: 8 million ← No YoY or MoM

With the memory system:

Conversation A (Monday):

User: Sales in East China

AI: 12 million

User: From now on, include YoY and MoM for this kind of data

AI: Okay → Memory system extracts and stores:

"User preference: Sales data needs YoY and MoM"

Conversation B (Wednesday):

User: Sales in South China

AI: 8 million, YoY +12%, MoM -3% ← Automatically applies preference

Note: MoM decline mainly affected by XX category

What the memory system means in practice: you no longer need to teach the AI the same thing repeatedly. It retains every valuable piece of information from each interaction and automatically recalls it in the next conversation.
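The YoY and MoM figures in the Wednesday reply are ordinary percentage changes against two different baselines. The helper below is a small sketch of that arithmetic; the function names and the sample numbers are made up for illustration and are not taken from AskTable.

```python
def pct_change(current: float, previous: float) -> float:
    """Percentage change from previous to current."""
    if previous == 0:
        raise ValueError("previous value must be non-zero")
    return (current - previous) * 100.0 / previous


def format_with_comparison(
    current: float, previous_month: float, same_month_last_year: float
) -> str:
    """Render a value with YoY and MoM, in the style of the reply above."""
    mom = pct_change(current, previous_month)
    yoy = pct_change(current, same_month_last_year)
    return f"{current:,.0f}, YoY {yoy:+.0f}%, MoM {mom:+.0f}%"
```

For example, a current value of 900 against 1,000 the previous month and 750 a year earlier is a 10% MoM decline and a 20% YoY increase.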

For technical details on the memory system, recommended reading: AskTable Permanent Memory System: Letting AI Remember Every Conversation

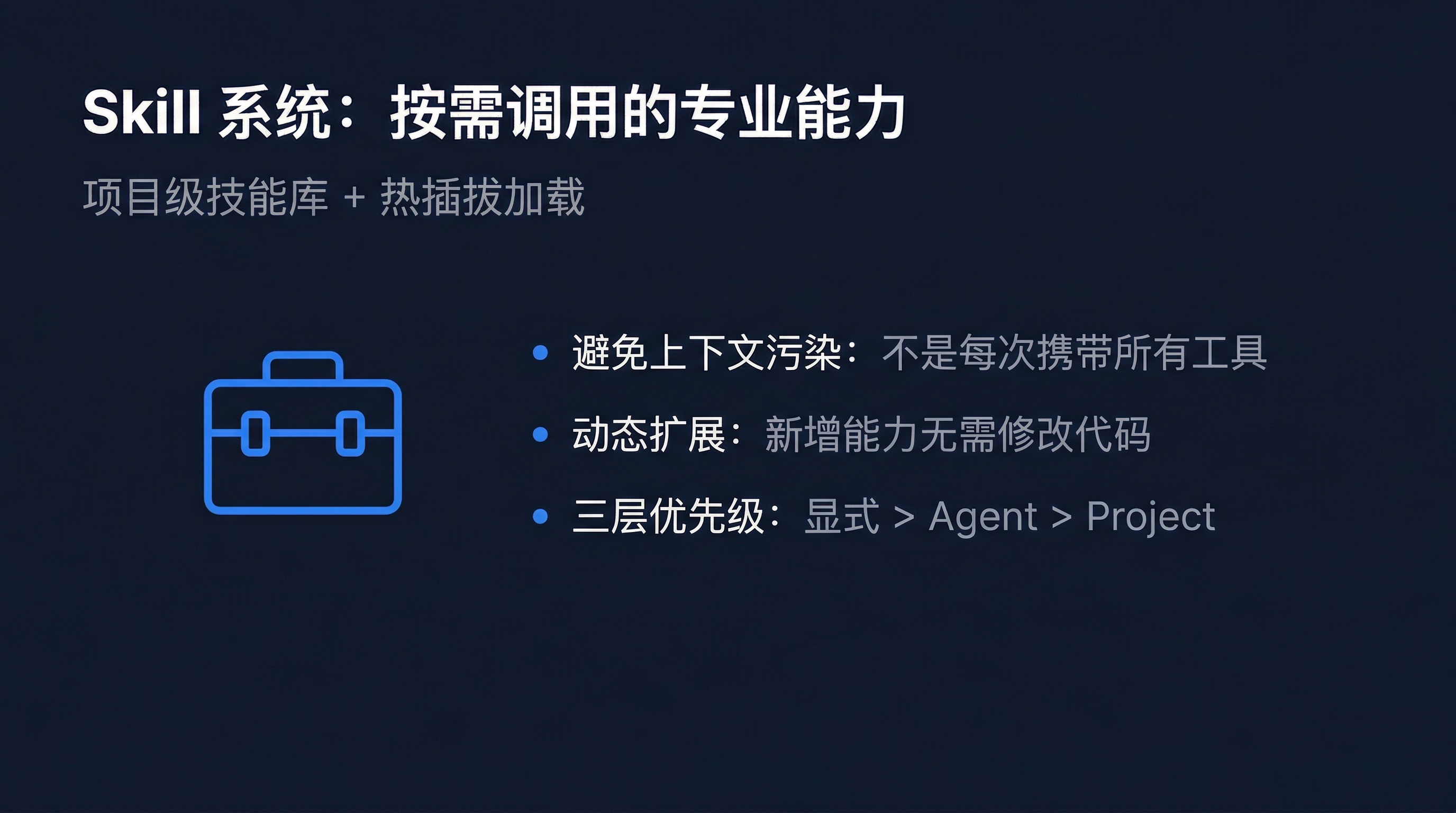

If the memory system solves AI's "experience accumulation" problem, then the Skill system solves the "professional capability" problem.

Traditional Agent approaches stuff all capabilities into system prompts or tool lists. This creates two problems:

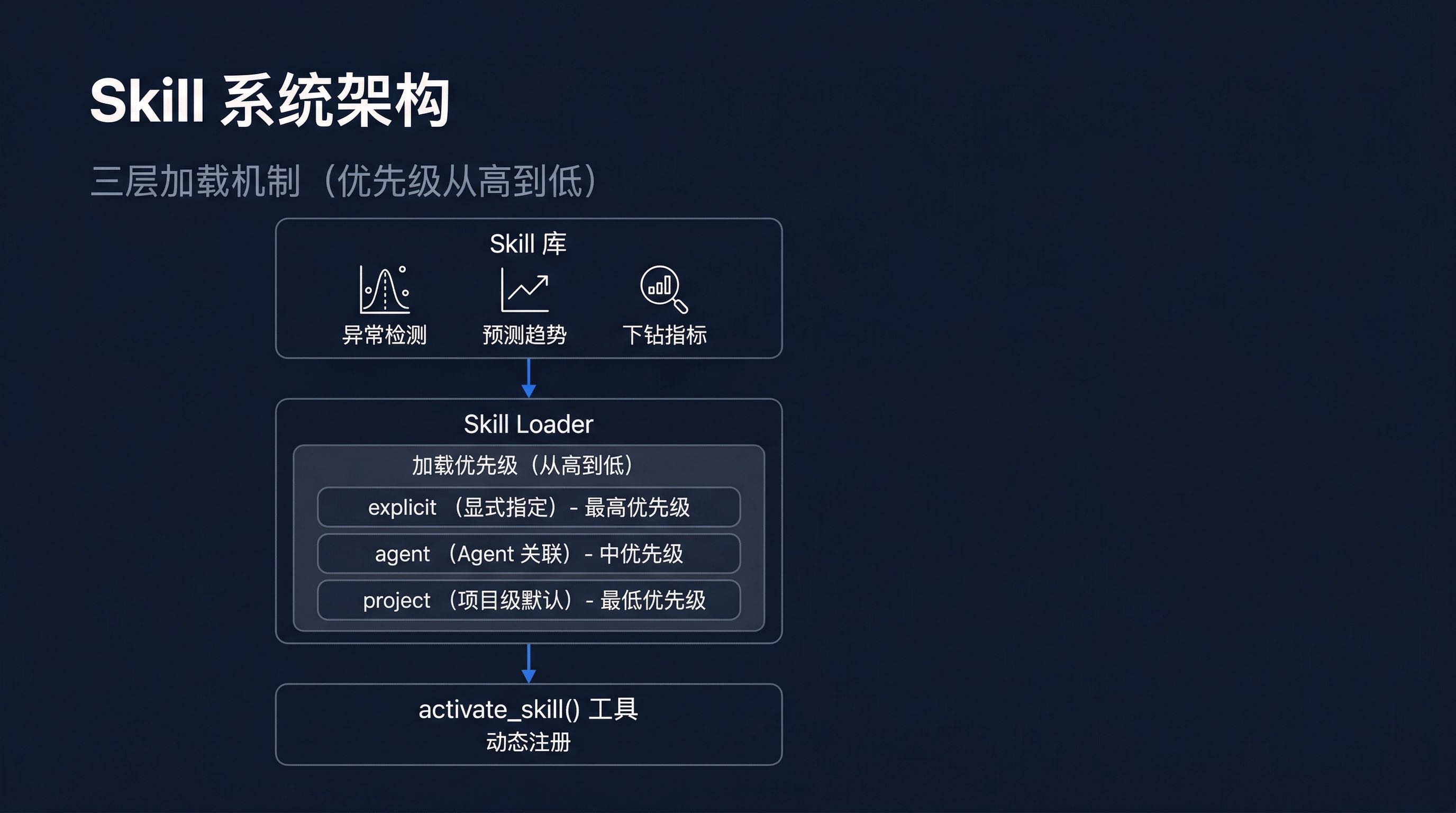

AskTable's Skill system uses a more elegant approach: project-level skill library + hot-swappable loading.

Core Design Points:

With the activate_skill() tool, Agents can activate skills on demand during conversations, expanding their capability boundaries.

The three-layer loading mechanism is one of the core innovations in the Skill system. It ensures that in different scenarios, Agents can get the most appropriate skill set:

The meaning of this design: neither forcing Agents to carry all skills every time (avoiding context pollution), nor sacrificing capability availability when needed.
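The on-demand idea can be sketched as a per-conversation session over a project-level skill library. This is a hypothetical illustration, not AskTable's implementation: the `Skill` and `SkillSession` classes, and the split between a short summary and full instructions, are assumptions made to show how context stays small until a skill is activated.

```python
from dataclasses import dataclass, field


@dataclass
class Skill:
    name: str
    summary: str       # short line that is always visible to the agent
    instructions: str  # full instructions, loaded only on activation


@dataclass
class SkillSession:
    """Hypothetical per-conversation view of a project-level skill library."""

    library: dict[str, Skill]
    active: set[str] = field(default_factory=set)

    def activate_skill(self, name: str) -> str:
        """Mark a skill active and return its full instructions."""
        if name not in self.library:
            raise KeyError(f"unknown skill: {name}")
        self.active.add(name)
        return self.library[name].instructions

    def context(self) -> str:
        """Summaries for every skill, full instructions only for active ones."""
        lines = []
        for skill in self.library.values():
            if skill.name in self.active:
                lines.append(f"[active] {skill.name}: {skill.instructions}")
            else:
                lines.append(f"[available] {skill.name}: {skill.summary}")
        return "\n".join(lines)
```

Until `activate_skill` is called, only the one-line summaries occupy the prompt, which is one way to avoid the context pollution mentioned above.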

AskTable has 11 built-in professional data analysis skills, covering the complete analysis chain from data exploration to report generation:

| # | Skill Name | Core Capability | Typical Scenario |
|---|---|---|---|
| 01 | Anomaly Detection | Auto-identify data anomalies | Discover sudden sales drops |
| 02 | Prediction Trend | Predict trends based on history | Forecast next month's revenue |
| 03 | Drill-Down Metrics | Multi-dimensional layer-by-layer breakdown | Find specific problem causes |
| 04 | Comparative Analysis | YoY/MoM/horizontal-vertical comparison | Store performance ranking comparison |
| 05 | Attribution Analysis | Quantify each factor's contribution | Attribute sales decline |
| 06 | Stress Testing | Multi-scenario simulation | "What if raw material prices rise 10%" |
| 07 | Cycle Analysis | Identify seasonal/cyclical patterns | Discover monthly/quarterly patterns |
| 08 | Report Orchestration | Auto-generate structured analysis reports | Monthly operations report |
| 09 | Metric Interpretation | Business perspective on metric meanings | Explain data to non-technical people |
| 10 | Data Quality Detection | Auto-discover data anomalies and missing values | Monitor data quality |
| 11 | Business Language Generation | Convert data conclusions to business language | Generate management-readable conclusions |

These 11 skills are like an analysis team's "standard arsenal": each one is a core capability of a professional data analyst.

For in-depth analysis of the Skill system, recommended reading: AskTable Skill System: Letting AI Agents Call Professional Capabilities On Demand

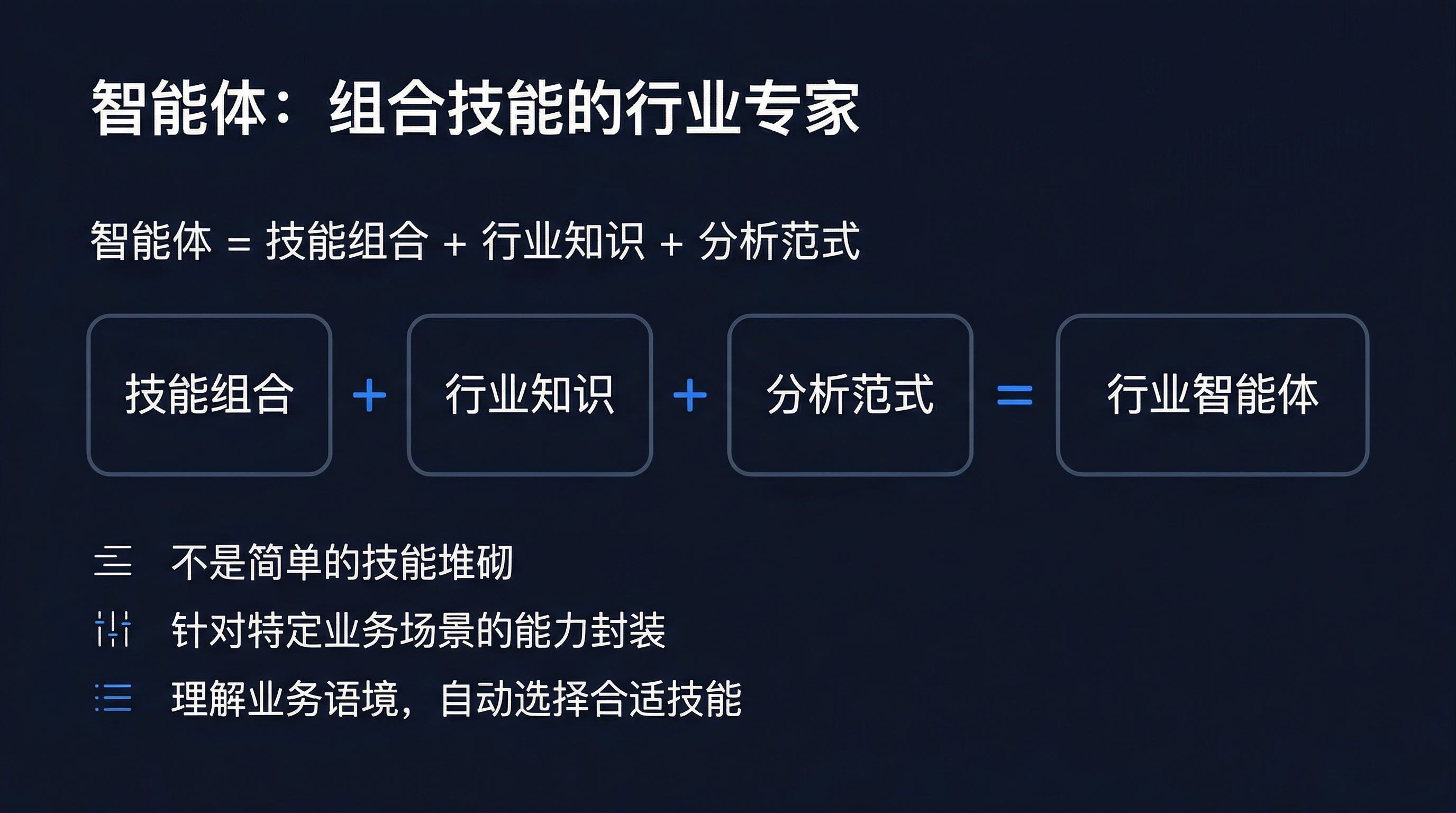

With memory and skills, the next step is how to organize them into a "knowledgeable" expert. This is the value of industry agents.

Agents aren't simply a stack of skills, but an encapsulation of skill combinations + industry knowledge + analysis frameworks for specific business scenarios.

AskTable comes with 9 pre-built industry agents, covering the most common data analysis scenarios:

| Agent | Positioning | Core Scenarios |
|---|---|---|
| Retail Operations Analyst | Retail store performance monitoring and analysis | Store performance anomaly detection, category analysis, store manager operation suggestions |
| E-commerce Data Monitor | E-commerce platform real-time data monitoring | GMV tracking, conversion rate monitoring, traffic source analysis |
| Financial Data Analyst | Financial statement analysis and interpretation | Income statement analysis, cost structure breakdown, budget execution monitoring |
| Market Insight Analyst | Market trends and competitive analysis | Market share, competitor benchmarking, consumer insights |
| Supply Chain Monitor | Full supply chain monitoring | Inventory early warning, delivery cycle analysis, supplier evaluation |
| User Growth Analyst | User growth and retention analysis | Customer acquisition cost, retention curves, LTV analysis |
| Executive Data Assistant | Management data Q&A | Quick operations metric queries, executive dashboard generation |
| Traffic Light Analyst | Operations health early warning | Metrics traffic light system, risk alerts, improvement suggestions |
| Data Quality Guardian | Data quality monitoring and governance | Anomaly data detection, completeness verification, quality reports |

The core value of agents is understanding your business context and automatically selecting the right skill combination.
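The idea of an agent as "skill combination + industry knowledge + analysis framework" can be pictured as a small data structure. The sketch below is hypothetical: the `IndustryAgent` class, its fields, and the way the system prompt is assembled are assumptions for illustration, and the article does not specify which skills each agent bundles.

```python
from dataclasses import dataclass


@dataclass
class IndustryAgent:
    name: str
    skills: tuple[str, ...]   # names from the built-in skill table
    glossary: dict[str, str]  # industry terminology
    framework: str            # analysis framework, as free text

    def system_prompt(self) -> str:
        """Combine terminology, framework, and skills into one prompt."""
        terms = "; ".join(f"{k} = {v}" for k, v in self.glossary.items())
        return (
            f"You are the {self.name}.\n"
            f"Terminology: {terms}\n"
            f"Framework: {self.framework}\n"
            f"Available skills: {', '.join(self.skills)}"
        )


# Illustrative configuration only; the skill assignment is an assumption.
ecommerce = IndustryAgent(
    name="E-commerce Data Monitor",
    skills=("Anomaly Detection", "Comparative Analysis", "Drill-Down Metrics"),
    glossary={"GMV": "gross merchandise value"},
    framework="traffic -> conversion -> average order value",
)
```

Calling `ecommerce.system_prompt()` yields a prompt that carries the business context, so the agent does not need it re-explained in each conversation.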

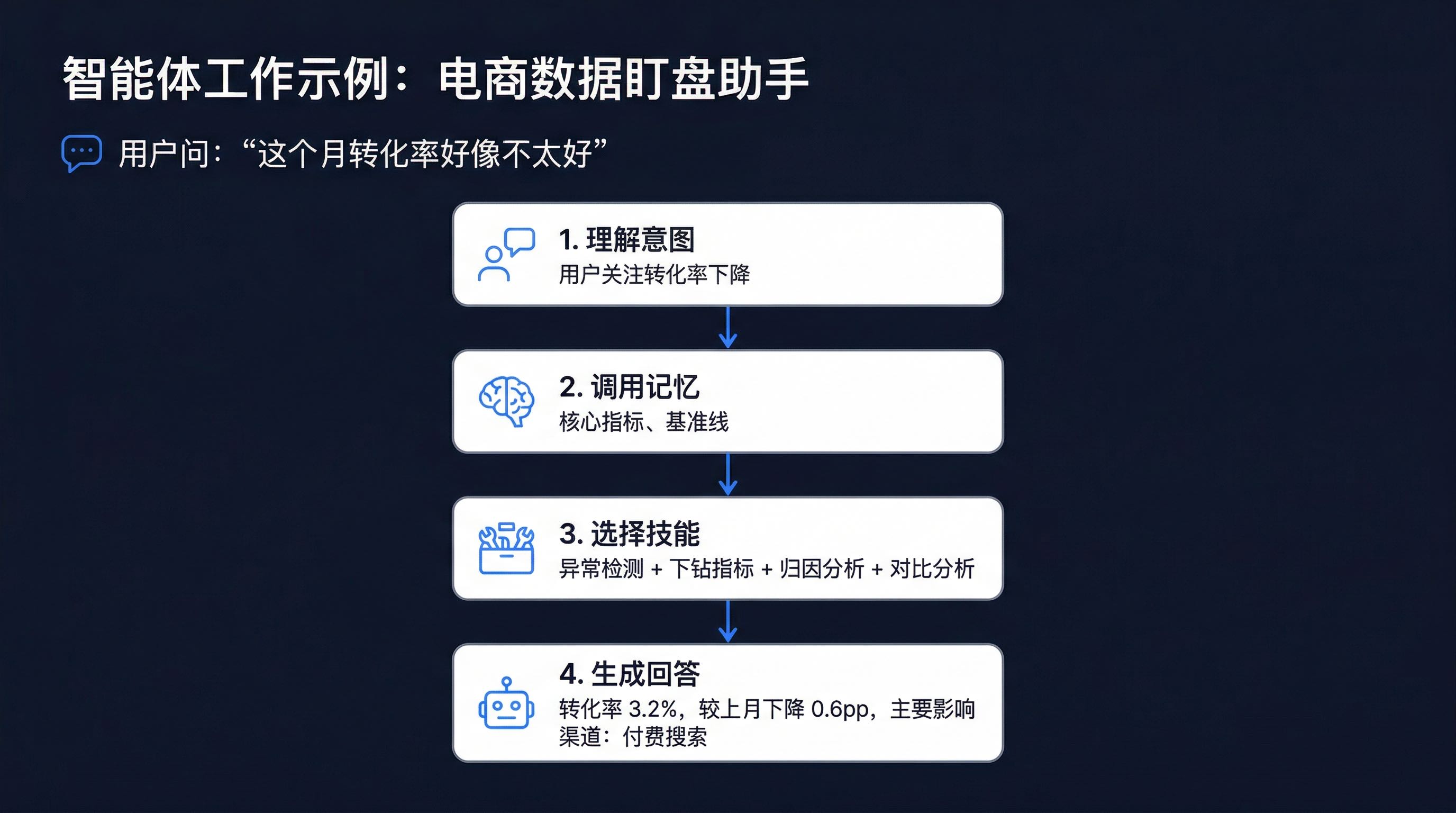

Take an e-commerce operations scenario as an example:

The reason agents can do this is because they:

For complete introduction to built-in skills and agents, recommended reading: AskTable Built-in Skills and Agents: Out-of-the-Box Data Analysis Capabilities

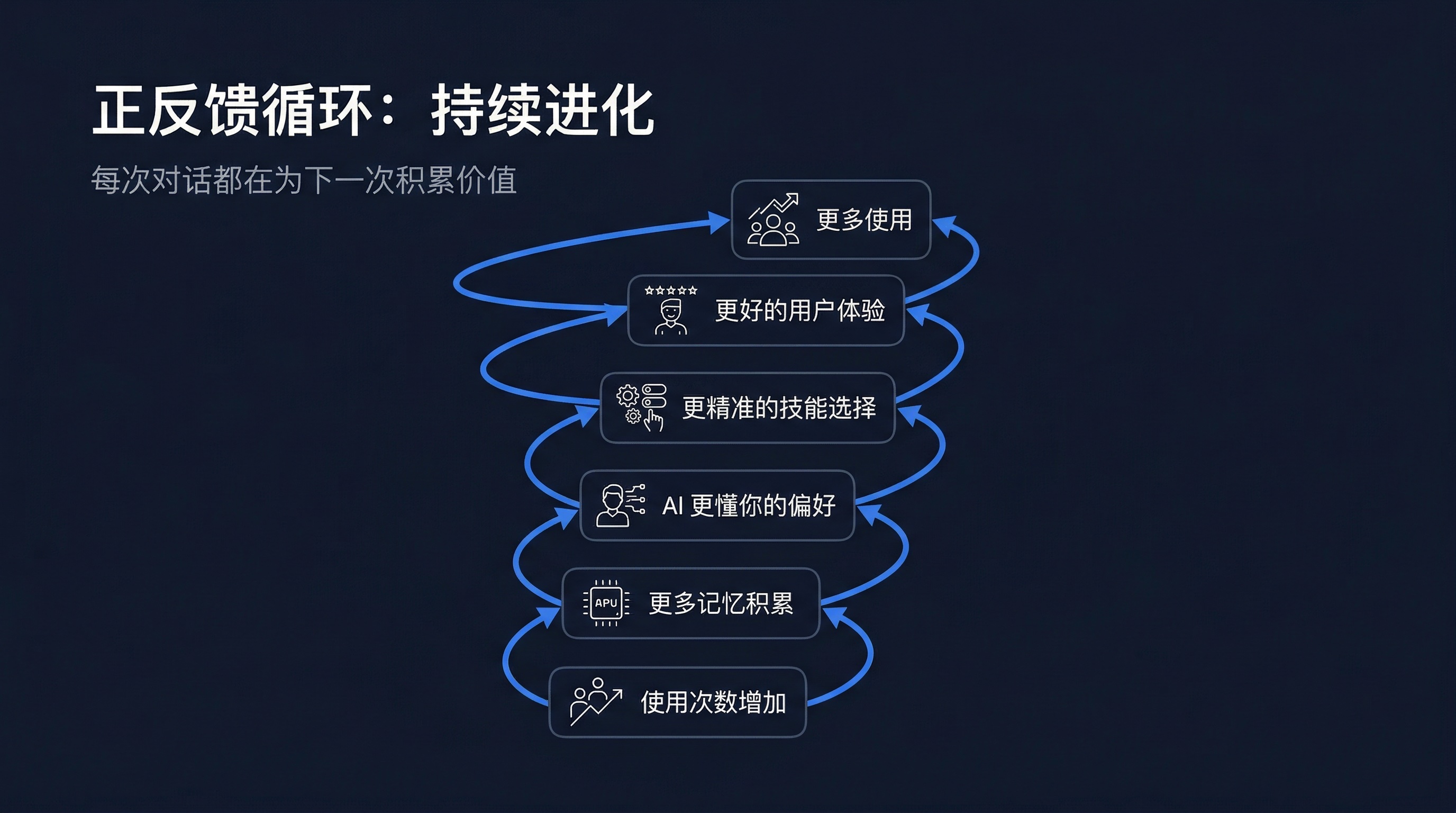

Memory, Skills, and Agents don't work independently—they form a mutually reinforcing positive feedback loop:

Let's look at a complete usage scenario to see how the three work together:

Scenario: A retail enterprise operations director uses AskTable for monthly operations analysis

═══════════════════════════════════════════════════════════════

Step 1: User Initiates Request

───────────────────────────────────────────────────────────────

User: "Show me last month's operations situation"

═══════════════════════════════════════════════════════════════

Step 2: Memory System Involvement (Read)

───────────────────────────────────────────────────────────────

Memory search discovers:

✓ User's Data Agent is "Executive Data Assistant"

✓ User preference: focuses on revenue, profit margin, YoY and MoM

✓ Last correction: "Use previous month for MoM, not moving average"

✓ Business context: Q1 revenue target 50 million, currently at 62%

→ This information is injected into the system prompt

═══════════════════════════════════════════════════════════════

Step 3: Agent Selects Skills

───────────────────────────────────────────────────────────────

The "Executive Data Assistant" agent, after analyzing user needs, decides to activate:

activate_skill("Cycle Analysis") → Identify last month's overall trend

activate_skill("Comparative Analysis") → YoY, MoM, target achievement

activate_skill("Anomaly Detection") → Check for any anomalous fluctuations

activate_skill("Drill-Down Metrics") → Locate main growth/decline sources

activate_skill("Business Language Generation") → Generate management-readable conclusions

═══════════════════════════════════════════════════════════════

Step 4: Execute Analysis

───────────────────────────────────────────────────────────────

AI executes skills in sequence to generate structured analysis:

📊 Last Month Operations Summary

━━━━━━━━━━━━━━━━━━━━━━━━

Revenue: 18.5M | YoY +15% | MoM +8%

Profit Margin: 18.5% | YoY +1.2pp | MoM -0.3pp

Q1 Target Achievement: 62% (on track)

📈 Key Findings:

01. Revenue growth mainly driven by new products in East China (contribution rate 65%)

02. Profit margin MoM slight decline, mainly due to raw material cost increase

03. South China performance abnormally low (2.1 standard deviations below mean)

⚠️ Risk Alert:

South China declining for two consecutive weeks, needs attention

═══════════════════════════════════════════════════════════════

Step 5: User Interaction and Feedback

───────────────────────────────────────────────────────────────

User: "That South China anomaly, help me focus on which stores have the problem"

→ Memory system records user's focus

→ Agent activates "Drill-Down Metrics" and "Anomaly Detection" skills

→ Generate detailed analysis

═══════════════════════════════════════════════════════════════

Step 6: Memory System Write (Async)

───────────────────────────────────────────────────────────────

After conversation ends, memory system async executes:

✓ Extract: User focuses on anomalous stores

✓ Extract: User continuously tracking South China performance

✓ Update: South China as current focus area

→ Next conversation, AI will proactively focus on South China

═══════════════════════════════════════════════════════════════
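The anomaly finding in Step 4 ("2.1 standard deviations below mean") is a standard-score comparison. A minimal sketch of that check follows; the function names, the threshold of -2.0, and the store figures used in the usage note are made up for illustration and do not describe how the Anomaly Detection skill is actually implemented.

```python
from statistics import mean, pstdev


def z_scores(values: dict[str, float]) -> dict[str, float]:
    """Standard score of each entry relative to the group mean."""
    data = list(values.values())
    mu = mean(data)
    sigma = pstdev(data)  # population standard deviation
    if sigma == 0:
        return {name: 0.0 for name in values}
    return {name: (v - mu) / sigma for name, v in values.items()}


def flag_low(values: dict[str, float], threshold: float = -2.0) -> list[str]:
    """Names whose z-score is at or below the (negative) threshold."""
    return [name for name, z in z_scores(values).items() if z <= threshold]
```

For instance, with eight hypothetical stores at 100, 102, 98, 101, 99, 100, 103, and 60, only the last one falls more than two standard deviations below the mean and is flagged. Note that with very few data points a population z-score cannot reach such a threshold, so this check is more meaningful at store level than across a handful of regions.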

The core advantage of this system is that it's a self-reinforcing positive feedback loop:

Every conversation accumulates value for the next. This is the true meaning of a "team that grows."

Consider how a mid-sized retail enterprise (annual revenue ~200M) changed over three months of using AskTable:

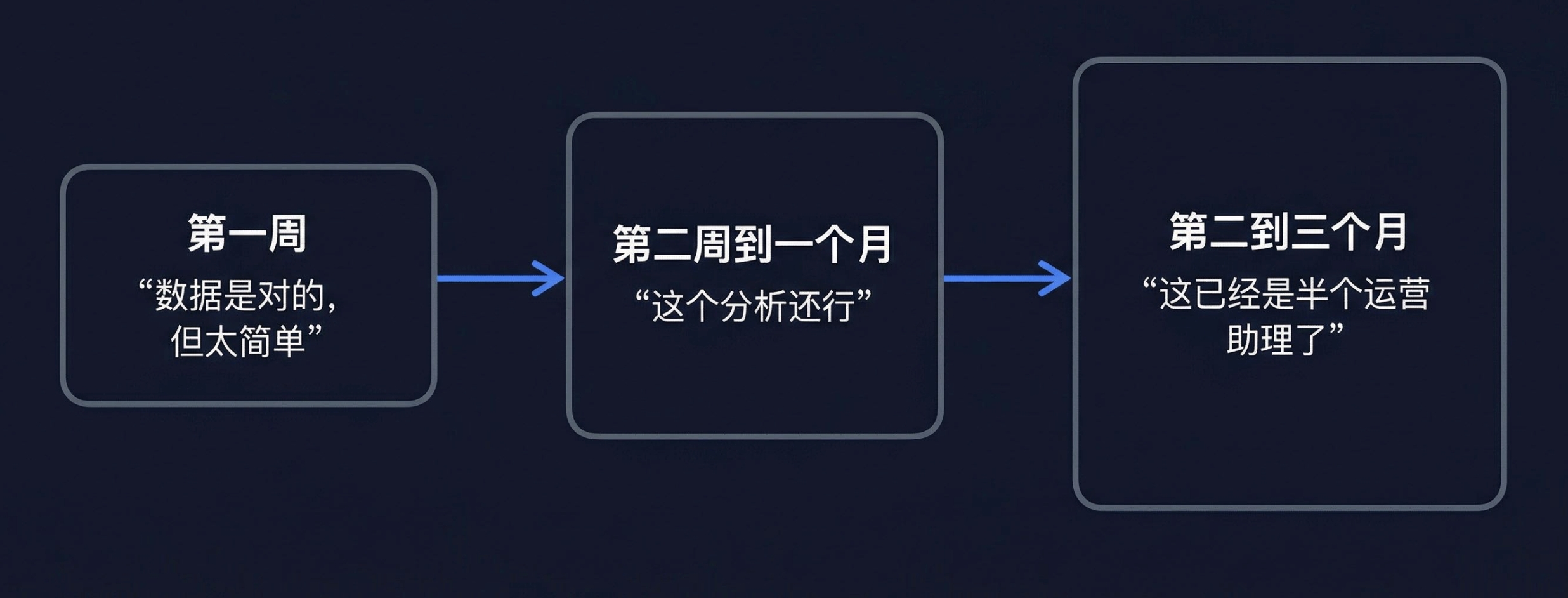

Week 1: Tool Stage

Operations Director Li Ming used AskTable for the first time, treating it as "a chatbot that can query data."

Li Ming: "Sales by region last month"

AI: "East 5.8M, South 3.2M, North 4.1M, Southwest 1.9M"

Li Ming: (thinking) Okay, data is correct, but too simple

At this point, AI is similar to an intern who can write SQL.

Week 2 to Month 1: Learning Stage

Li Ming started giving AI feedback:

Li Ming: "From now on, include YoY and MoM for this kind of data"

AI: "Okay"

Li Ming: "Why is South China so bad this month?"

AI: (calls Anomaly Detection + Drill-Down analysis)

"South China 3.2M, MoM down 22%, YoY down 8%

Main drag: Guangzhou Tianhe store (-45%), Shenzhen Nanshan store (-31%)

Common factor: both stores had renovation downtime"

Li Ming: (thinking) This analysis is pretty good

Memory system started working—Li Ming's preferences, focus areas, and analysis habits were recorded.

Month 2 to 3: Colleague Stage

Li Ming: "This month's operations situation"

AI: "📊 March Operations Express

━━━━━━━━━━━━━━━

Revenue 18.5M, YoY +15%, MoM +8%

Profit Margin 18.5%, YoY +1.2pp

Q1 Target Achievement 62%, on track

📈 Highlights:

East China new product series drove 12% revenue growth

⚠️ Attention:

South China still recovering, but MoM up 5%

(Tianhe store you focused on last month is back to normal operations)

Should I generate a detailed monthly operations report?"

Li Ming: (thinking) This is already half an operations assistant

At this point, AI:

This is the transformation from "tool" to "colleague."

| Dimension | Early Use | After Three Months |
|---|---|---|
| Average queries per session | 4-6 rounds | 1-2 rounds |
| Monthly operations report generation | Half day → 3 minutes | 1 minute (AI proactively generates) |
| Data anomaly discovery time | Found at weekly meeting | Proactive alert same day |
| Li Ming's trust in AI | "Need to verify its data" | "Can basically use directly" |

Information stored in the memory system directly affects agent skill selection:

```python
# Pseudocode: memory-enhanced skill selection.
# `memory` is the agent's MemoryProvider and `build_prompt` a prompt helper;
# both are assumed to be defined elsewhere.
async def select_skills_with_memory(agent, user_query):
    # 1. Retrieve relevant context from the memory system
    memories = await memory.search(user_query, agent_id=agent.id)

    # 2. Inject memory into the prompt
    enhanced_prompt = build_prompt(
        base_prompt=agent.system_prompt,
        memories=memories,  # Contains user preferences and historical focus
    )

    # 3. Agent selects skills based on the enhanced prompt
    selected_skills = await agent.decide_skills(
        query=user_query,
        prompt=enhanced_prompt,
    )

    # 4. Activate the selected skills
    for skill in selected_skills:
        await agent.activate_skill(skill)

    return selected_skills
```
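The prompt-injection step in the pseudocode above is left abstract. One plausible shape for a `build_prompt` helper is sketched here, assuming the retrieved memories have already been reduced to plain text strings; the section heading text and the `max_memories` cap are assumptions, not part of AskTable's documented behavior.

```python
def build_prompt(base_prompt: str, memories: list[str], max_memories: int = 5) -> str:
    """Append retrieved memories to the agent's base system prompt."""
    if not memories:
        return base_prompt
    bullet_lines = "\n".join(f"- {m}" for m in memories[:max_memories])
    return (
        f"{base_prompt}\n\n"
        "Known context from earlier conversations:\n"
        f"{bullet_lines}"
    )
```

Capping the number of injected memories keeps the prompt bounded even as the stored memory grows.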

Skill execution results and user feedback become new memories:

```
User asks: "Show me last month's operations"
│
├── AI calls multiple skills to generate report
│
├── User feedback: "I want to see South China data weekly"
│   │
│   └── Memory system extracts:
│       "User wants to track South China data weekly"
│
└── Next time user asks about operations
    │
    └── AI proactively includes South China weekly analysis
```

The agent is the "brain" of the entire system, responsible for:

```
Agent Scheduling Flow

Receive user request
              │
              ▼
┌────────────────────────────┐
│ Understand user intent     │
└─────────────┬──────────────┘
              │
              ▼
┌────────────────────────────┐
│ Retrieve memory context    │ ← Read memory
└─────────────┬──────────────┘
              │
              ▼
┌────────────────────────────┐
│ Select related skills      │ ← Use Skill
└─────────────┬──────────────┘
              │
              ▼
┌────────────────────────────┐
│ Execute analysis and reply │
└─────────────┬──────────────┘
              │
              ▼
┌────────────────────────────┐
│ Async write new memory     │ ← Write memory
└────────────────────────────┘
```

Traditional data analysis tools are essentially "query engines"—you input questions, it returns data. Each interaction is independent, with no accumulation, no growth.

AskTable's approach is to transform AI data analysis into a **"growing team"**:

| Dimension | Traditional Tools | AskTable |
|---|---|---|
| Experience | Start from scratch each time | Cross-session accumulation, understands you better with use |
| Capability | Fixed feature set | Dynamically expandable, called on demand |
| Cognition | General Q&A | Industry expert, understands business context |

The design philosophy of this architecture is openness and extensibility:

This means AskTable's capability boundary isn't fixed, but continuously expands with use.

"Good AI tools don't get smarter—they get to know you better."

Reviewing AskTable's three core capabilities:

Memory System: Letting AI stop forgetting. Through mem0 + Qdrant's read-write separation design, accumulating team-level collective memory at the Data Agent level.

Skill System: Letting AI have professional capabilities. Project-level skill library + hot-swappable loading + 11 built-in skills, covering the complete analysis chain from anomaly detection to report generation.

Industry Agents: Letting AI understand the business. Nine pre-built agents combine skills into analysis capabilities for specific business scenarios, ready to use out of the box.

The three work together, forming a continuously evolving positive feedback loop—memory makes agents understand users better, skills make agents more capable, and every agent use generates new memory and skill optimization.

This isn't about building a better query tool—it's about building a data analysis team that grows.
